Query Azure VM Tags from Log Analytics

In the following example, I'll show you how to pull tag data for virtual machines, but this approach can be easily modified to support any type of Azure resource needed.

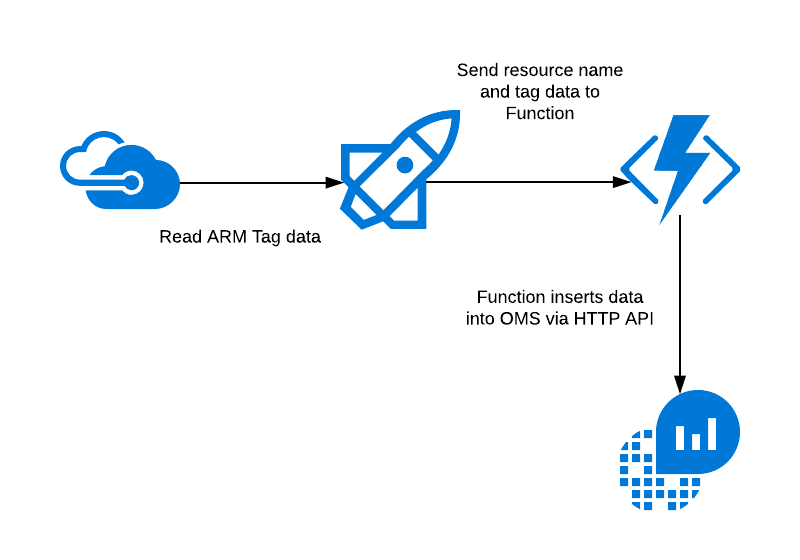

Solution Design

The basic outline of the solution is shown below. We'll be using a Logic App to poll and pull Azure resources every 15 minutes, extracting tag information and inserting this data into OMS using the HTTP Data Collector API.

In the following sections, we'll break down each piece of this architecture and see what’s involved in creating this type of solution.

Logic App

The core of our orchestration logic leverages a Logic App that handles reading information from Azure resources, determining if they are the correct type, and finally making calls to insert this data (via an Azure Function, discussed below). Logic Apps are perfect for this type of work as they allow you to focus on the core logic instead of debugging and testing integration points in your code. More details can be found in the Logic Apps documentation.

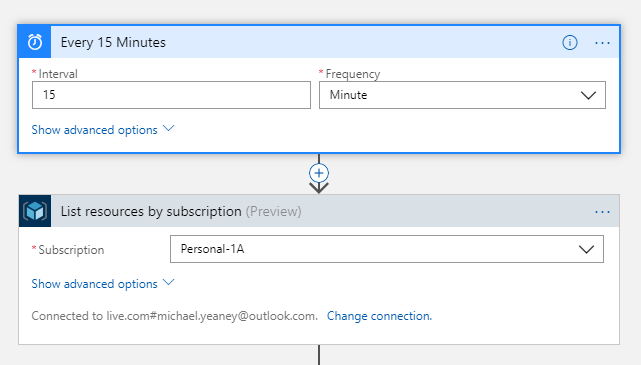

For this sample, the heart of the Logic Apps workflow is a schedule-driven trigger that lists all Azure resources every 15 minutes. This is straightforward, and makes up the first 2 steps of our workflow:

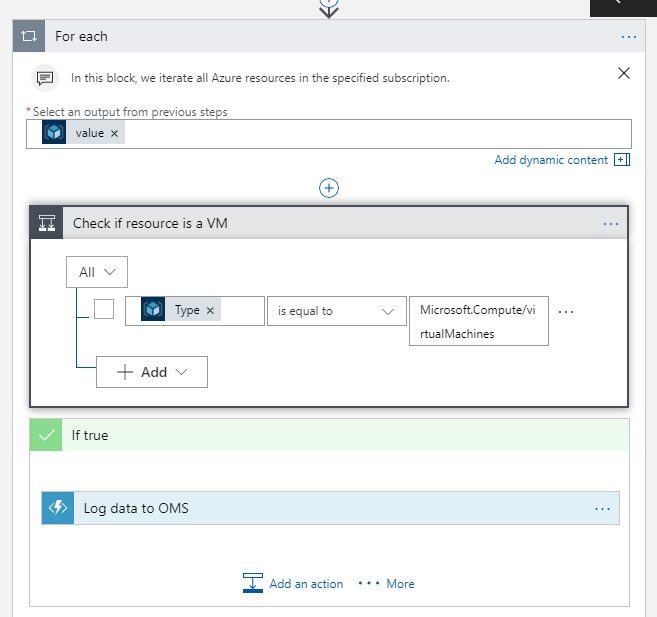

Next, we’ll iterate over each of these resources and look for resources that are virtual machines. Note that (by default) For-Each loops in Logic Apps run in parallel, so we are getting some extra efficiency goodness by letting the platform manage and schedule this work for us. This is a huge benefit when you start comparing the effort to create this type of execution environment on your own.

Note the filter on resource type of "Microsoft.Compute/virtualMachines" to make sure we’re only working with virtual machines; you could change this to look for any resource type needed.
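As a rough sketch, the same filter written as a condition expression (advanced mode) might look like the following. The loop name `For_each` is an assumption; use whatever name your own loop action has.

```text
@equals(items('For_each')?['type'], 'Microsoft.Compute/virtualMachines')
```

Swapping the string for another resource type, such as `Microsoft.Storage/storageAccounts`, would target that type instead.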

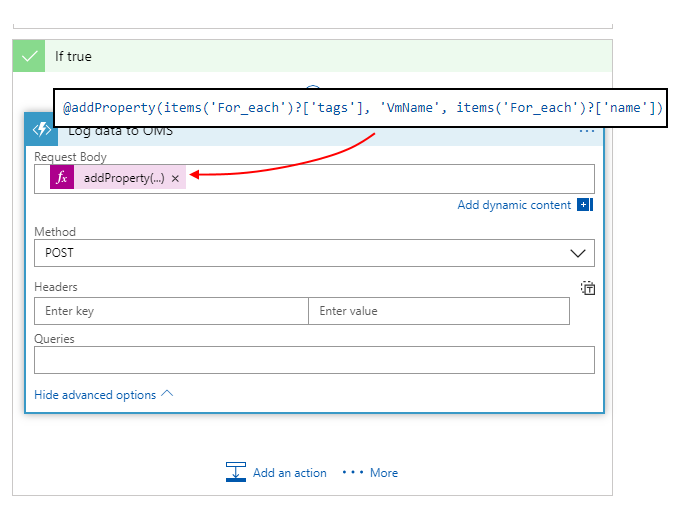

Finally, we’ll call an Azure Function (discussed below) to help us insert this data into OMS leveraging the HTTP Data Collector API. Calling an Azure Function from Logic Apps is natively supported, and only requires us to specify the payload we want to send:

In this case, I’m simply grabbing the "Tag" JSON from the resource manager description. However, note that I’m adding a runtime property named "VmName" to this structure so that OMS knows which VM these tags belong to. While this expression may look a bit tedious, it is simple to set up in Logic Apps using the Expression editor.
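One way to build that payload, shown here as a sketch rather than the exact expression from the original workflow, is the built-in `addProperty` function. The loop name `For_each` is again an assumption, and `tags` and `name` are the properties Resource Manager returns for each resource.

```text
@addProperty(items('For_each')?['tags'], 'VmName', items('For_each')?['name'])
```

A VM with no tags at all may return a null `tags` value, so you may want to wrap the first argument in `coalesce(..., json('{}'))` before adding the property.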

Function App

Azure Functions are the perfect partner to Logic Apps for custom extensibility points that require code or other logic that isn't possible within Logic Apps. In this case, the Function app handles the single task of inserting a JSON payload into OMS. This requires some standard code to build up an HMAC signature in order to correctly authenticate our POST call to insert data.

To create our function, we'll add a new HTTP-triggered function, and select "C#" for the language. Sample code is shown below - be sure to supply your workspace ID and primary (or secondary) access key so the function is able to write data to your OMS workspace:

```csharp
using System;
using System.Net;
using System.Net.Http;
using System.Net.Http.Headers;
using System.Security.Cryptography;
using System.Text;
using System.Threading.Tasks;

// Update customerId to your Log Analytics workspace ID
static string customerId = "--workspace ID--";

// For sharedKey, use either the primary or the secondary Connected Sources client authentication key
static string sharedKey = "-- Primary or Secondary Key--";

// LogName is the name of the event type that is being submitted to Log Analytics
static string LogName = "CustomTagData";

public static async Task<HttpResponseMessage> Run(HttpRequestMessage req, TraceWriter log)
{
    log.Info("Starting OMS proxy execution...");

    // Create a hash for the API signature
    var datestring = DateTime.UtcNow.ToString("r");
    var rawPayload = await req.Content.ReadAsStringAsync();
    var jsonBytes = Encoding.UTF8.GetBytes(rawPayload);
    var stringToHash = "POST\n" + jsonBytes.Length + "\napplication/json\n" + "x-ms-date:" + datestring + "\n/api/logs";
    var hashedString = BuildSignature(stringToHash, sharedKey);
    var signature = "SharedKey " + customerId + ":" + hashedString;

    // Insert data in OMS (awaited so the function does not return before the POST completes)
    await PostData(signature, datestring, rawPayload, log);

    // Indicate success. PostData logs failures instead of throwing,
    // so this returns 200 even if the insert itself failed.
    return req.CreateResponse(HttpStatusCode.OK, "Successful");
}

// Build the API signature: HMAC-SHA256 over the string to sign, keyed with the decoded shared key
public static string BuildSignature(string message, string secret)
{
    var encoding = new System.Text.ASCIIEncoding();
    byte[] keyByte = Convert.FromBase64String(secret);
    byte[] messageBytes = encoding.GetBytes(message);

    using (var hmacsha256 = new HMACSHA256(keyByte))
    {
        byte[] hash = hmacsha256.ComputeHash(messageBytes);
        return Convert.ToBase64String(hash);
    }
}

// Send a request to the POST API endpoint
public static async Task PostData(string signature, string date, string json, TraceWriter log)
{
    try
    {
        var url = "https://" + customerId + ".ods.opinsights.azure.com/api/logs?api-version=2016-04-01";

        using (var client = new HttpClient())
        {
            client.DefaultRequestHeaders.Add("Accept", "application/json");
            client.DefaultRequestHeaders.Add("Log-Type", LogName);
            client.DefaultRequestHeaders.Add("Authorization", signature);
            client.DefaultRequestHeaders.Add("x-ms-date", date);

            var httpContent = new StringContent(json, Encoding.UTF8);
            httpContent.Headers.ContentType = new MediaTypeHeaderValue("application/json");

            var response = await client.PostAsync(new Uri(url), httpContent);
            var result = await response.Content.ReadAsStringAsync();
            log.Info("Return Result: " + result);
        }
    }
    catch (Exception excep)
    {
        log.Error("API Post Exception: " + excep.Message);
    }
}
```

Note this code is only for a proof-of-concept, and should be modified/hardened as appropriate for your specific environment.

Log Analytics Queries

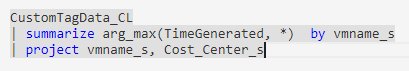

Now that we have the data in Log Analytics, we are ready to query it and make use of it. As we can see from the above code, we are capturing every tag value along with the VM name associated with the tags, every 15 minutes. Noting the special naming that Log Analytics uses for custom data collection (notably, appending “_CL” after the table name), we can query for the most recent tag values for each VM using the following query syntax:

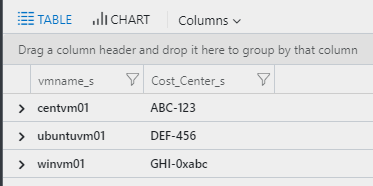
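In text form, a query of roughly this shape returns the most recent record per VM. This is a sketch, not necessarily the exact query from the screenshot: it assumes the table is named `CustomTagData_CL` (from `LogName` in the function) and that the VM name lands in a column called `VmName_s`, since the Data Collector API appends a type suffix such as `_s` to custom string fields.

```kusto
CustomTagData_CL
| summarize arg_max(TimeGenerated, *) by VmName_s
```

`arg_max(TimeGenerated, *)` keeps the whole row with the newest `TimeGenerated` for each VM, so each tag column would also carry its own suffix (for example, a hypothetical `Environment` tag would appear as `Environment_s`).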

Why do we need to look for the latest value? Since we are logging tag values every 15 minutes, we're only really interested in the latest values; sorting by the TimeGenerated column gives us the latest set of data recorded (otherwise we would end up with duplicates). Using this data, we can now build a reference table that can be used to drive alerts and similar logic from within your Log Analytics queries. An example of this reference table output is shown below:

Wrapping Up

In this simple example, we've seen how to leverage Azure Logic Apps and Functions to build a robust, scalable data ingestion pipeline that could easily be modified to work for all sorts of data. This is also an excellent use of platform services to bolster your operational efficiency, while gaining all the benefits of a fully managed platform. I'll be covering some more use-cases in upcoming posts, but would love to hear what you've built in the meantime. Comment below or ping me on Twitter; I'm looking forward to the discussion!